Why GenAI-based coding agents are a complex domain in Cynefin - and what that means for adoption

generative AI genAI development Cynefin decision making fast flow context engineering platform strategy harness engineeringContact me for information about consulting and training at your company.

The MEAP for Microservices Patterns 2nd edition is now available

These days, tremendous energy is being poured into software delivery using GenAI-based coding agents. A key part of using coding agents successfully is context engineering - carefully crafting prompts, skills, AGENT.md files, guardrails, etc. - to guide agent behavior. This typically requires significant experimentation and iteration. A prompt that works for one model version may fail with another.

This difficulty is not accidental. Using GenAI-based coding agents to reliably create high-quality software is fundamentally different from using traditional developer tools. The relationship between prompt and outcome cannot be fully predicted in advance. Effective practices evolve as teams experiment and learn.

As a result, organizations cannot treat coding agents like conventional technologies that can be standardized through a single “one true way.” Instead, they must adopt a mindset that prioritizes safe experimentation, rapid feedback, and continuous adaptation.

This article introduces the Cynefin framework - a model for understanding different types of problem domains - and explains why using GenAI-based coding agents belongs in the complex category and what that means for platform strategy and adoption.

About Cynefin

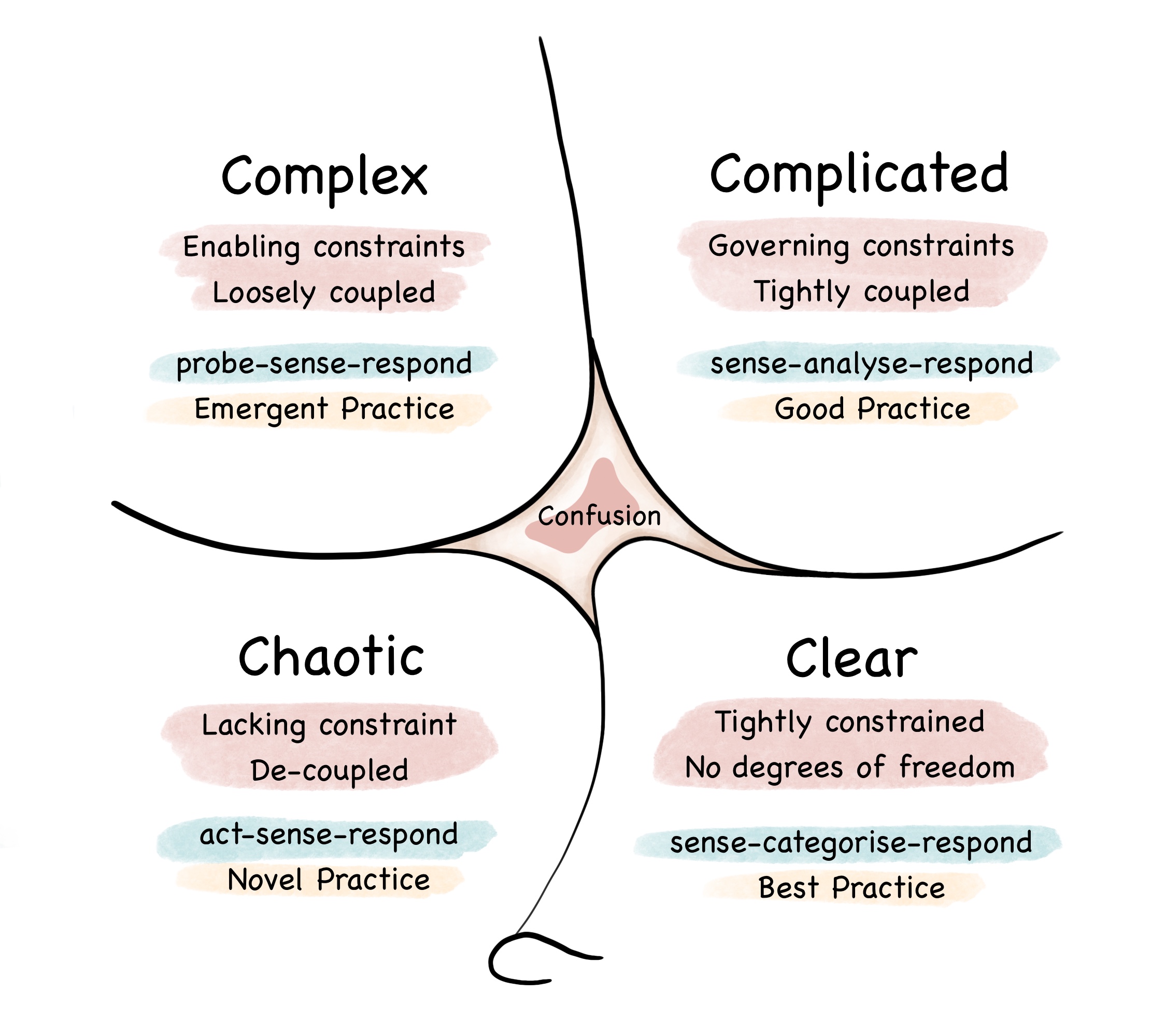

Cynefin is a framework that helps us understand the nature of the problem we’re facing and how we should respond to it. It characterizes problems as either clear, complicated, complex, or chaotic, and for each category, it prescribes a different approach to problem-solving. Cynefin distinguishes between situations where cause and effect are obvious, where they require expert analysis, where they can only be understood in retrospect, and where no perceivable order exists.

The framework is typically visualized as follows:

Let’s look at the clear, complicated, and complex domains in more detail and then see how they relate to software development in general and GenAI-based coding agents in particular.

About Clear domains

In clear domains, the relationship between cause and effect is obvious. You simply need to recognize the situation and apply the established rule or best practice.

In a clear domain, effective problem-solving requires:

- Sense - observe and establish facts

- Categorize - match it to a known pattern

- Respond - apply the established best practice

A simple CRUD-style User Profile Management subdomain can be considered a clear domain.

About complicated domains

In complicated domains, the relationship between cause and effect is knowable but not obvious. Determining the correct response requires analysis and expertise.

In a complicated domain, effective problem-solving requires:

- Sense - gather data

- Analyze - use expertise to determine cause

- Respond - apply a good practice

Income tax calculation is an example of a complicated domain: the rules are deterministic, but applying them correctly requires careful analysis and expert knowledge, due to the large number of interdependent rules, exceptions, and conditional thresholds.

About Cynefin complex domains

In a complex domain, the relationship between cause and effect can only be understood in retrospect and may not be repeated reliably. Progress requires safe-to-fail experiments rather than upfront analysis. There may not be a single optimal solution - only solutions that work better or worse in a given context.

In a complex domain, effective problem solving requires:

- Probe - run safe experiments

- Sense - observe what happens

- Respond - amplify what works, dampen what doesn’t

Urban delivery routing and scheduling is an example of a complex domain. Traffic patterns, demand fluctuations, and human behavior create emergent outcomes that cannot be fully predicted in advance, which means effective solutions require experimentation and adaptation.

Why software development is complex

Software development - the activity of building and evolving non-trivial software systems - is complex in Cynefin terms. Some individual development tasks are clear or complicated - for example, applying a framework API, configuring infrastructure, or implementing a well-understood algorithm. But architectural and design decisions must be made under uncertainty before their full consequences can be known. Defining service boundaries, choosing abstractions, and modeling the domain are therefore complex activities in their own right, since the “right” decision cannot be determined through analysis alone. Quite often the consequences of these decisions only become visible through testing, deployment, and real-world use rather than through upfront analysis. As a result, effective software development requires iterative experimentation, rapid feedback, and continuous adaptation. Except when building trivial applications, it also requires expert architectural judgment - in other words, experienced developers.

Developer tools are clear or complicated

Although software development as a whole is complex, typical developer technologies - programming languages, frameworks, build tools, databases - are usually clear or complicated domains. The relationship between cause and effect is stable and knowable. You learn the rules, the APIs, and the configuration options, and then apply them.

When problems arise, they can usually be resolved through analysis, debugging, or consulting documentation. Even when experimentation is required, the underlying behavior is governed by defined rules and produces consistent results. And, while systems built with these tools can exhibit complex behavior, the tools themselves operate according to stable and analyzable rules. What’s more, when the tools themselves are changing rapidly, they might feel even chaotic, but that’s an emotional response to change rather than an inherent property of the domain. In other words, most developer tools require expertise and analysis rather than safe-to-fail experimentation.

Using an LLM-based coding agent is a complex domain

What makes LLMs so powerful is that they can tackle tasks within complex domains - including software development. Yet this power comes with important differences. LLM-based coding agents are fundamentally different from traditional developer tools. Using such agents to reliably create high-quality software is a complex domain because the relationship between prompt and outcome cannot be fully predicted in advance. A prompt that works in one context may fail in another. Small changes in wording, context, or model version can produce disproportionately different results.

Cause and effect can only be understood in retrospect

The system does not expose a stable, fully understandable set of rules that can be exhaustively learned and applied. Because outcomes depend on the interaction between prompt wording, model configuration, tool calls, retrieved context, and the surrounding codebase, behavior arises from the system as a whole rather than from a single predictable rule set. Instead, progress requires iterative prompting, experimentation, and rapid feedback. Effective use of a coding agent therefore resembles safe-to-fail experimentation more than traditional tool usage driven by analysis and expertise.

Probabilistic nature of LLMs

This unpredictability stems from the nature of LLMs themselves. LLMs are probabilistic models that generate tokens by sampling from a probability distribution conditioned on the context. Depending on the sampling strategy and model configuration, the same prompt can yield different outputs. This probabilistic behavior helps explain why using an LLM-based coding agent is a complex domain, where cause and effect cannot be reduced to fixed, analyzable rules.

Organizations must manage the complexity of the software system and the complexity of using coding agents at the same time. Adoption therefore requires a thoughtful and deliberate approach.

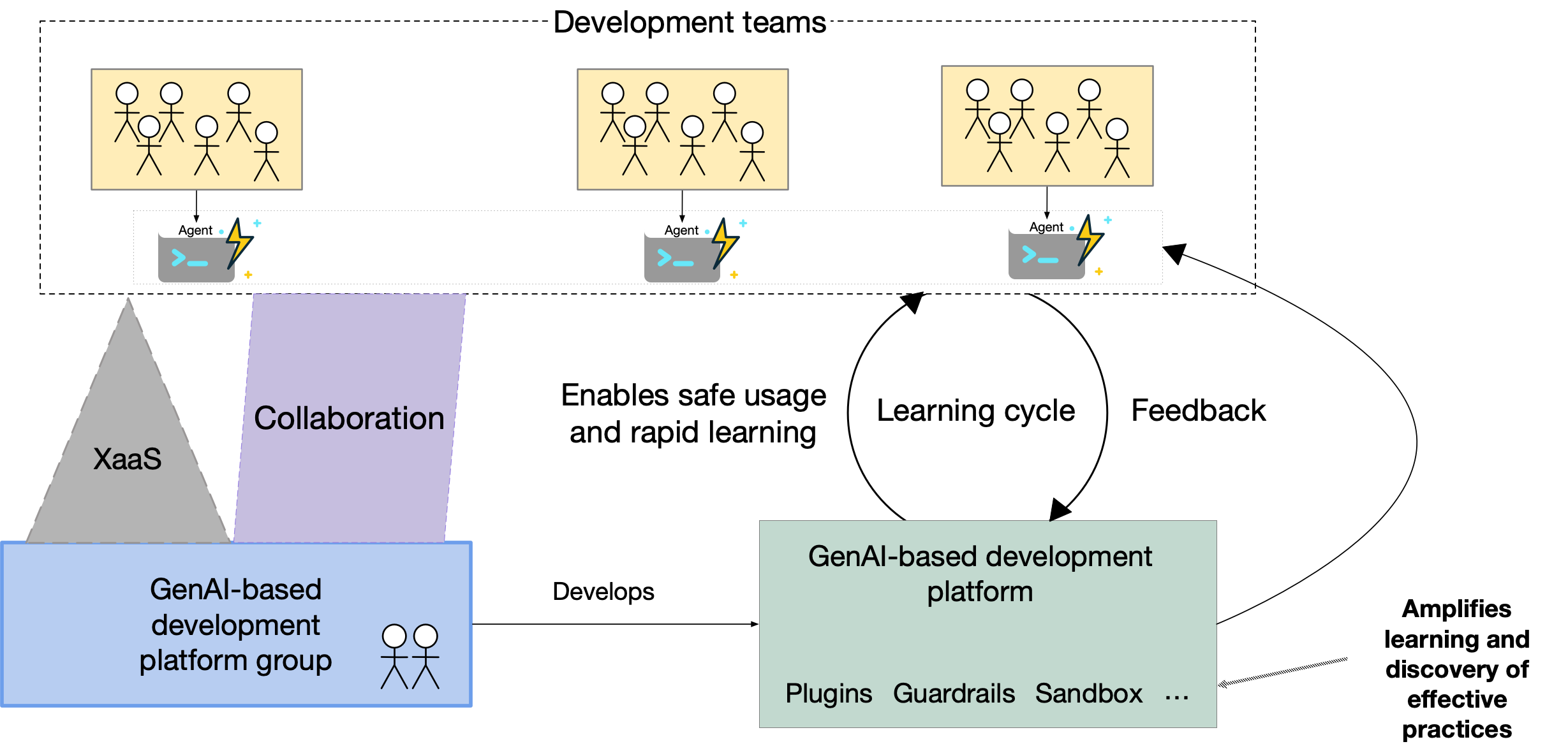

Organizations must adopt a complex-domain mindset for coding agents

When an organization adopts GenAI-based coding agents, it cannot treat them like traditional developer tools. First, it must shift engineering practices toward fast feedback and disciplined experimentation. Second, the organization must define a GenAI-based development platform - the platform that supports the use of coding agents - as a learning amplifier, not as a vehicle for enforcing a rigid “one true way.” Let’s first look at the implications for engineering practices and then explore how this complexity shapes platform strategy and governance.

Engineering practices must shift

First, if coding agents are treated instead as a merely complicated domain - essentially as more advanced automation - organizations are likely to overtrust their outputs and underinvest in feedback loops, guardrails, and human-in-the-loop checkpoints. In such a complex environment, automated testing and real-time observability must explicitly replace manual policy as the primary mechanism for governance. This, in turn, requires a fast-flow development model designed for experimentation and rapid feedback - without it, large-scale adoption of coding agents will stall.

Managing the unpredictability of outcomes

Second, the inherent unpredictability of GenAI-based coding agents requires a profound leadership mindset shift: moving away from the illusion of top-down control and toward a culture of continuous discovery. Because adoption is non-linear and success emerges through iteration rather than upfront analysis, leaders cannot rely on traditional rollout schedules or standardized mandates. Instead of attempting to eliminate variance, leaders must embrace an agile mindset that prioritizes enablement and evolutionary progress.

The platform must not act as a policy factory

Third, this complexity also shapes platform strategy and governance. In clear or complicated domains, a platform group that’s responsible for an internal development platform can act as a policy factory. It defines standards, publishes the one true way, and measures success through consistency and compliance. That approach works when cause and effect are stable and the best practices are knowable.

Because developing software with GenAI-based coding agents is a complex domain, practices do not converge on a single, fixed best practice. Prompting strategies, context engineering patterns, guardrails, and workflows evolve as teams experiment and learn. What works today may need refinement tomorrow as models, tooling, and organizational context change. The “one true way” is neither fully knowable nor stable. Although certain patterns may stabilize over time, model evolution, changing tooling, and shifting organizational context ensure that practices remain sensitive to change.

The GenAI-based development platform must amplify learning

Because reliably using coding agents operates in the Cynefin complex domain, a policy factory approach freezes learning too early. Instead, the GenAI-based development platform - the platform that supports the use of coding agents - must function as a learning amplifier. It should enable safe-to-fail experimentation, share emerging patterns, and provide guardrails. The goal of the platform and its team shifts from prescribing correctness to accelerating collective learning.

Acknowledgements

Thanks to Indu Alagarsamy for reviewing this article and providing valuable feedback.

Need help with modernizing your architecture?

I help organizations modernize safely and avoid creating a modern legacy system — a new architecture with the same old problems. If you’re planning or struggling with a modernization effort, I can help.

Learn more about my modernization and architecture advisory work →

Premium content now available for paid subscribers at

Premium content now available for paid subscribers at